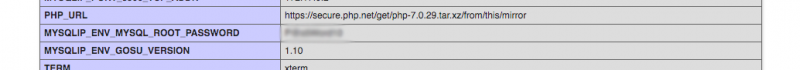

If you are using iDRAC6 with your Mac, good luck to you, my friend: you will most likely keep getting "connection failed" from the Java console application, and that is VERY scary because you can't talk to your machine anymore! But there is a solution (at least, I figured one out), so let's get started.

Java Setup

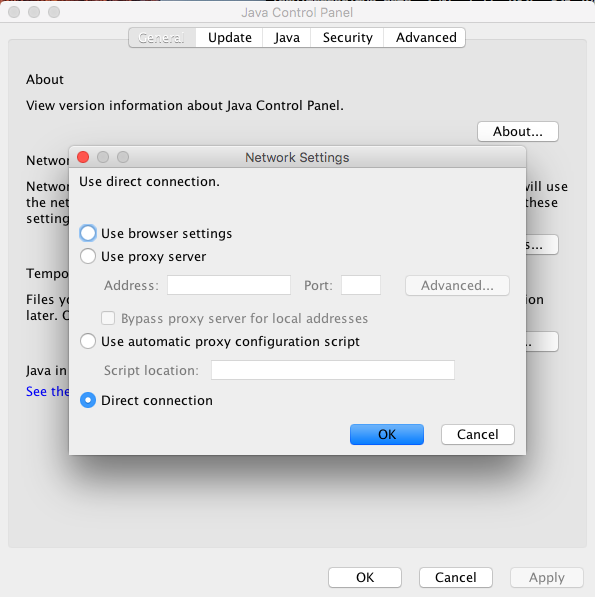

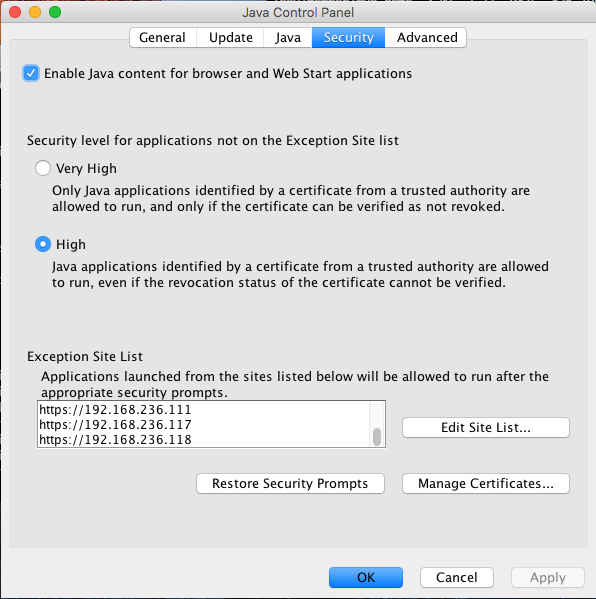

First, open the Java Control Panel on your Mac and make the changes shown in the screenshots above: add your iDRAC's address to the Exception Site List (Security tab), and set Network Settings to "Direct connection" (General tab).
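If you prefer the terminal, the Exception Site List can also be edited as a plain file. The sketch below assumes Oracle's Java plugin, which keeps the per-user list at ~/Library/Application Support/Oracle/Java/Deployment/security/exception.sites with one URL per line; the iDRAC address is a hypothetical placeholder you must replace with your own. The Direct connection setting is not covered here, so still set that in the Control Panel.

```shell
#!/bin/sh
# Hypothetical iDRAC address: replace with your own (scheme + host only).
IDRAC_URL="https://192.168.1.120"

# Assumed location of Oracle Java's per-user exception site list on macOS.
SITES_DIR="$HOME/Library/Application Support/Oracle/Java/Deployment/security"
SITES_FILE="$SITES_DIR/exception.sites"

# Create the directory and file if they do not exist yet.
mkdir -p "$SITES_DIR"
touch "$SITES_FILE"

# If the file does not end with a newline, add one so the new URL
# lands on its own line.
if [ -s "$SITES_FILE" ] && [ -n "$(tail -c 1 "$SITES_FILE")" ]; then
  printf '\n' >> "$SITES_FILE"
fi

# Append the URL only if it is not already listed (exact, whole-line match).
if ! grep -qxF "$IDRAC_URL" "$SITES_FILE"; then
  printf '%s\n' "$IDRAC_URL" >> "$SITES_FILE"
fi
```

Running it more than once is harmless: the grep check keeps the URL from being added twice. Restart the Java Control Panel afterwards and the entry should show up under Security > Edit Site List.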

Once you've added the site to the Exception Site List and set your connection to direct, open Terminal and edit the Java security file, located at:

sudo vi "/Library/Internet Plug-Ins/JavaAppletPlugin.plugin/Contents/Home/lib/security/java.security"

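The path contains a space, so keep the quotes. Inside vim, look for SSLv3 (it is part of the jdk.tls.disabledAlgorithms setting) and comment it out by putting a # at the start of the line: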

#jdk.tls.disabledAlgorithms=SSLv3, RC4, MD5withRSA, DH keySize < 1024, \

# EC keySize < 224, DES40_CBC, RC4_40, 3DES_EDE_CBC

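The setting continues onto a second line (note the trailing backslash), so in this case I needed to comment out both lines. Once you're done, save the file (:wq in vim) and the Java security tweak is complete!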
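The same edit can be scripted instead of done by hand in vim. This is a minimal sketch built around a hypothetical helper named comment_out_disabled_algorithms: it copies the file to <file>.bak first, then prefixes every line of the jdk.tls.disabledAlgorithms property with #, following backslash continuations so both lines get commented. It assumes the property starts at the beginning of a line, as in the stock java.security file.

```shell
#!/bin/sh
# Hypothetical helper: comment out jdk.tls.disabledAlgorithms (including its
# backslash-continued lines) in a java.security file, keeping a .bak backup.
comment_out_disabled_algorithms() {
  jsec_file="$1"
  # Keep the original next to the file so the change can be undone.
  cp "$jsec_file" "$jsec_file.bak" || return 1
  # Read from the backup and write back into the original file, which
  # preserves the original file's owner and permissions.
  awk '
    # Start of the property we want to disable.
    /^jdk\.tls\.disabledAlgorithms=/ { in_prop = 1 }
    in_prop {
      print "#" $0
      # A line without a trailing backslash ends the property value.
      if ($0 !~ /\\$/) in_prop = 0
      next
    }
    # Every other line is copied through unchanged.
    { print }
  ' "$jsec_file.bak" > "$jsec_file"
}

# Usage (save this file as fix-java-security.sh, then run):
#   sudo sh -c '. ./fix-java-security.sh; comment_out_disabled_algorithms "/Library/Internet Plug-Ins/JavaAppletPlugin.plugin/Contents/Home/lib/security/java.security"'
```

sudo is needed because the file belongs to the Java plugin. If anything goes wrong, copying java.security.bak back over java.security restores the original settings.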

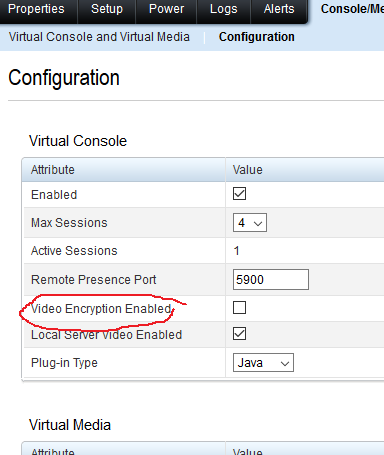

iDRAC Changes

First, log in to the iDRAC web interface, head over to Console/Media, and disable Video Encryption.

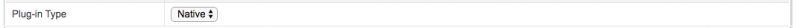

Then change 'Plug-in Type' to 'Native'.

Click Apply and you are done. Now try launching the console again.